Deckō

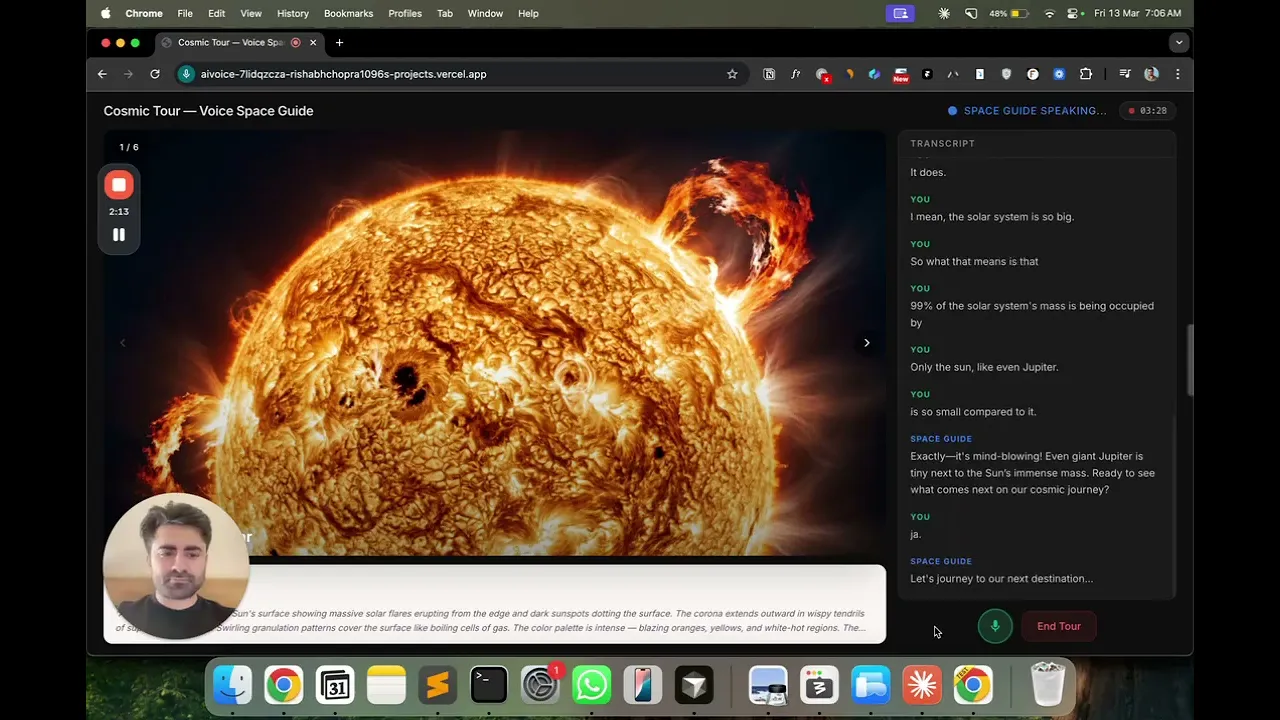

You can talk to your slide show now. Dig deep into a specific slide, or skip a few. The AI understands conversational cues and knows when to move to the next slide automatically.

When you're at a museum and the guide finishes explaining a painting, you don't say "next painting please." You say "huh, cool" or "got it," and they just move on because they can read when you're done.

So I tried to build that: an AI that narrates a slideshow and knows when you're finished without you explicitly telling it.

First version was a disaster. The AI would advance mid-sentence if you said "okay" while you were still thinking. Or it would wait awkwardly forever because you hadn't said the magic words.

The fix wasn't adding more AI capability; it was better prompt engineering. I gave the model two tools: `next_slide()` and `go_to_slide(n)`. Then I wrote a system prompt that said: advance when someone gives casual closure signals like "got it" or "interesting." Don't advance when they're actively asking questions. And if they say "take me to Saturn," just jump there.
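To make the two-tool setup concrete, here is a minimal sketch of how it could be wired up. It is not the actual code behind this project: the prompt wording is a paraphrase of the rules above, the tool descriptions use a generic JSON-Schema style that would need adapting to whichever model API you use, and `Deck`, `apply_tool_call`, the 1-based numbering, and the slide titles are all hypothetical names and choices made for illustration.

```python
from dataclasses import dataclass

# Hypothetical system prompt, paraphrasing the rules described above.
SYSTEM_PROMPT = """You narrate a slideshow, one slide at a time.
- Call next_slide() when the listener gives a casual closure signal
  such as "got it" or "interesting".
- Do not advance while the listener is actively asking questions.
- If the listener asks for a specific topic ("take me to Saturn"),
  call go_to_slide(n) with that slide's number.
"""

# Tool descriptions in a generic JSON-Schema style (assumed format).
TOOLS = [
    {
        "name": "next_slide",
        "description": "Advance to the next slide.",
        "parameters": {"type": "object", "properties": {}},
    },
    {
        "name": "go_to_slide",
        "description": "Jump directly to slide n (1-based).",
        "parameters": {
            "type": "object",
            "properties": {"n": {"type": "integer"}},
            "required": ["n"],
        },
    },
]


@dataclass
class Deck:
    titles: list[str]
    current: int = 1  # 1-based index of the slide being shown

    def next_slide(self) -> int:
        # Stay on the last slide instead of running off the end.
        self.current = min(self.current + 1, len(self.titles))
        return self.current

    def go_to_slide(self, n: int) -> int:
        if not 1 <= n <= len(self.titles):
            raise ValueError(f"slide {n} is out of range")
        self.current = n
        return self.current


def apply_tool_call(deck: Deck, name: str, args: dict) -> int:
    """Apply a tool call requested by the model and return the new slide number."""
    if name == "next_slide":
        return deck.next_slide()
    if name == "go_to_slide":
        return deck.go_to_slide(int(args["n"]))
    raise ValueError(f"unknown tool: {name}")


# Hypothetical deck: the listener is on "Earth" and asks for Saturn.
deck = Deck(
    ["Sun", "Mercury", "Venus", "Earth", "Moon", "Mars", "Jupiter", "Saturn"],
    current=4,
)
apply_tool_call(deck, "go_to_slide", {"n": 8})
print(deck.titles[deck.current - 1])  # Saturn
```

In this sketch the code only executes whatever the model decides. The judgment about when to advance lives entirely in the prompt, which matches the point above that the fix was prompt engineering rather than extra capability.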

The moment I knew it worked: I was on a slide about Earth and said "actually, I already know about Earth — show me something I don't know." It skipped three slides to Saturn. No explicit command. It just understood what I meant.

This is really a UX problem disguised as an AI problem. The hard part isn't making AI understand language — it's knowing when to move on versus when someone is still thinking. Get it wrong, you interrupt people mid-thought. Get it right, it feels like talking to someone who actually listens.